Radiation Oncologist Perceptions and Utilization of Digital Patient Assessment Platforms

Abstract

Background: Patient engagement is increasing in the presence of digital patient assessment platforms, or physician rating websites. Despite this rapid growth, data remains insufficient regarding how these evaluations impact radiation oncologists.

Objectives: The purpose of this study was to survey radiation oncologists worldwide about their awareness of digital patient assessment platforms and the effects they have observed from them.

Methods: An electronic survey was delivered to 6,199 members of the American Society for Radiation Oncology. Subjects were radiation oncologists practicing throughout the world. The survey consisted of 14 questions focused on demographics, practice details, patient volume, institutional utilization of patient reviews, and perceptions of radiation oncologists on health care reviews provided by patients.

Results: There were 447 responses from practicing radiation oncologists, 321 (72%) of whom practiced in the US. Most respondents (228; 51%) either agreed or strongly agreed that patients consider online reviews when deciding which physician to visit. Of all respondents, 188 (42%) reported that their institution checks their online feedback, whereas 157 (36%) reported not knowing and 99 (22%) reported that, to their knowledge, their institution does not check their online feedback. Respondents who saw more than the average number of consults per week were significantly more likely to receive negative feedback (P = 0.005). Forty-five percent of respondents agreed or strongly agreed that online virtual assessment tools contribute to physician burnout. Respondents (100; 22%) who received inappropriate or misdirected feedback were significantly more likely to report that virtual reviews contribute to burnout (P = 0.001).

Conclusions: Radiation oncologists need to be aware that self-reported patient assessments are a data point in the quality of a physician and health care establishment. To best ensure appropriate feedback of a physician’s capabilities as a doctor, leadership and employee alignment for patient experience are now more important than ever.

In modern health care, patients’ engagement with health care selection and evaluation is growing. Patients’ expectations are being shaped by the customized and convenient experiences they have grown accustomed to in other industries. As a result, they are demanding greater capabilities, including more engaging digital experiences.1,2 This increase in digital patient engagement is evident in the presence of digital public physician rating platforms, or physician rating websites, alongside the more conventional institutional feedback surveys and third-party survey vendors approved by the Centers for Medicare & Medicaid Services (CMS). Although patient experience does not always correlate with quality care, patient experience measures can address attributes of care that improve quality. Eliciting the patient’s perspective is considered essential in appropriate shared decision-making, understanding safety and confidentiality information, and understanding how care impacts a patient’s life.3

Digital physician rating platforms in the form of various sites allow patients to evaluate their physicians in a public forum with the option for free text responses. In particular, the presence of physician rating websites has grown considerably with increasing numbers of practicing physicians in the US searchable on at least one site.4 In a survey conducted by Deloitte, 23% of respondents in 2018 (compared to 16% to 18% in 2015) had looked up a report card for a physician in the past year, and 53% intended to in the future.2,4 In searching for care, 20% of polled patients listed “high user reviews from other patients” as one of their most important factors.2 In a 2015 study by Mayo Clinic, 28% of patients strongly agreed that a positive review would cause them to seek care from that physician, and 27% indicated that a negative review would cause them to avoid care from that physician.4 This suggests that a sizeable fraction of the population places considerable weight on physician reviews.

Despite the rapid growth of digital platform services for patients to rate physicians and hospitals, insufficient data has been gathered about how these evaluations are collected, impact health care providers, and are interpreted. In some cases, negative online reviews have been associated with nonphysician variables.5 In addition, physicians with negative online feedback compared with those without negative online feedback had similar scores on CMS-approved surveys.5 Naturally, physicians may be concerned about their online reputation, which may be affected by nonquality metrics.6,7

As physicians in different specialties may serve different patient populations, it is important to stratify perception of these surveys by specialty. In fact, one study found overall patient satisfaction scores varied by specialty, with radiation oncology scoring the highest amongst medical specialties.8 In this report, radiation oncologists in the US and abroad were surveyed about their perception of patient feedback surveys. This study aims to provide insights into how radiation oncologists use and view patient evaluations.

Methods

An electronic survey was delivered to 6,199 members of the American Society for Radiation Oncology (ASTRO) using a list compiled by ASTRO leaders. While membership includes radiation oncologists, physicists, radiation therapists, dosimetrists, and nurses, the inclusion criterion for survey subjects was being a radiation oncologist currently practicing anywhere in the world. There were no exclusion criteria. The survey was developed by the authors and consisted of 14 questions focused on perceptions of radiation oncologists on patient health care reviews. Demographic questions collected information on age, gender, practice location, practice type, and patient volume. Five-point Likert scales were used to assess radiation oncologists’ opinions on patient utilization of online reviews when deciding which doctor to visit and on the contribution of virtual reviews to physician burnout. Additional questions were designed to assess institutional use of patient-filled assessments and physician opinions of reviews. All responses to the survey questions were analyzed. Descriptive statistics were used to summarize the results. Data were analyzed in R (version 3.6.1). Fisher’s exact test was used to compare proportions, and the Wilcoxon rank sum test was used for continuous values. A generalized linear model with a suitable link function was fitted when multiple explanatory variables were involved. Statistical significance was defined a priori as P < 0.05. This study (18-8011) was approved by an institutional review board.
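To illustrate how Fisher’s exact test compares two proportions, the following is a minimal sketch in Python using only the standard library. It is not the authors’ R code. The function name `fisher_exact_two_sided` and the 2x2 counts in the usage lines are hypothetical and are not survey data. The two-sided P value is taken as the sum of the probabilities of all tables that are no more likely than the observed one, which is one common convention.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact P value for the 2x2 table [[a, b], [c, d]].

    Rows are the two groups being compared; columns are the two outcomes.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    total = row1 + row2
    denom = comb(total, col1)

    def prob(x):
        # Hypergeometric probability of x in the top-left cell
        # when all row and column totals are held fixed.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    lowest = max(0, col1 - row2)
    highest = min(row1, col1)
    p_observed = prob(a)
    # Add up every table that is no more likely than the observed one.
    # The small relative tolerance guards against floating-point ties.
    p_value = sum(
        p
        for p in (prob(x) for x in range(lowest, highest + 1))
        if p <= p_observed * (1 + 1e-9)
    )
    return min(1.0, p_value)


# Hypothetical counts for illustration only (not taken from the survey).
p = fisher_exact_two_sided(3, 1, 1, 3)
print(f"two-sided P = {p:.4f}")
```

With samples as small as this hypothetical table, an exact test is preferred over a chi-square approximation because it does not rely on large expected cell counts.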

Results

Characteristic Data of Respondents

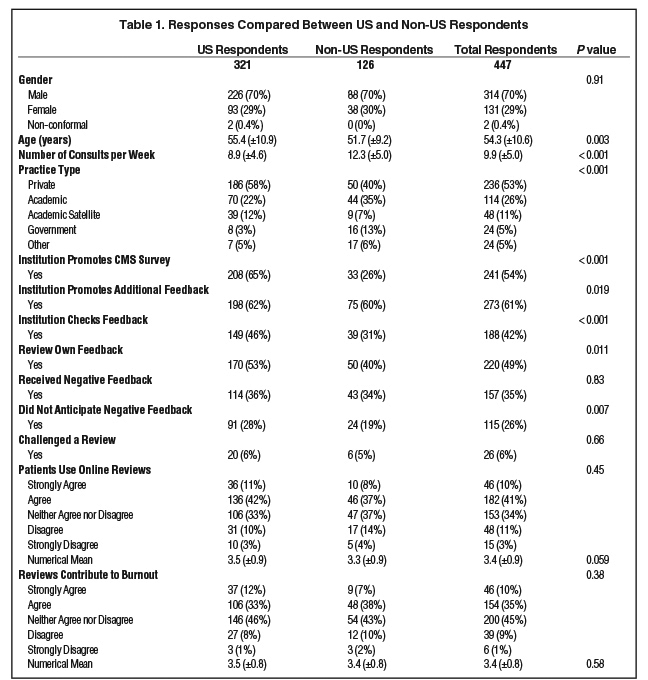

There were 447 respondents, 321 (72%) of whom were practicing in the US. Most respondents (314; 70%) identified as male, 131 (29%) identified as female, and 2 (1%) identified as another gender. The mean age was 54 years, ranging from 35 to 86 years. Of all respondents, 126 (28%) were practicing outside of the US, 87 (20%) were from the Northeast, 83 (19%) were from the Midwest, 78 (17%) were from the South, and 73 (16%) were from the West. Figure 1 shows the estimated weekly volume of radiation oncology consults, shown separately for all respondents and for US respondents. Table 1 compares demographic information of US respondents and non-US respondents.

Types of Health Care Reviews

Of all respondents, 200 (45%) reported that their institution promotes the Press Ganey vendor survey, 111 (25%) reported not knowing whether their institution promotes a CMS-approved health care survey, 94 (21%) reported that their institution does not promote a CMS-approved health care survey, and 41 (9%) reported that their institution promotes a CMS-approved health care survey other than that by Press Ganey. Table 1 compares answers of US respondents and non-US respondents.

Regardless of CMS-approved survey usage, 342 (77%) of respondents reported that their institution encourages patient feedback. Other types of promoted patient feedback beyond the CMS-approved surveys consisted of paper forms (205; 46%), online rating or digital platform sites (98; 22%), and a supported social media page (39; 9%). The remaining respondents either reported that their institution did not solicit additional patient feedback (92; 21%) or were not aware of additional options for soliciting patient feedback (89; 20%). Those practicing in the US were significantly (P = 0.02) more likely to report that their institution encouraged additional patient feedback vs those practicing outside the US (Table 1).

Of all respondents, 188 (42%) reported that their institution checks their online feedback, 157 (36%) reported not knowing, and 99 (22%) reported that, to their knowledge, their institution did not check their online feedback. Online feedback checking by institutions in the US was more common than that by institutions outside the US (46% vs 31%, respectively, P < 0.001). There was no significant association between institutions checking online feedback and practice type (P = 0.07).

Awareness of Respondents

Of all respondents, 225 (51%) reported not reviewing their online feedback, 76 (17%) reported checking monthly, 59 (13%) reported checking yearly, 50 (11%) reported checking less than once a year, 24 (5%) reported checking weekly, and 11 (3%) reported checking daily. Respondents who reported checking their feedback (220) were in the following settings: 60% in private practice, 18% in academic, 12% in academic satellite, 5% in government, and 5% in other practices. For respondents who reported not checking their feedback (225), the distribution was 45.3% private, 33.3% academic, 9.3% academic satellite, 6.0% government, and 6.0% other (P = 0.002). Respondents who check their feedback (220) were more likely to work at an institution that promotes a CMS-approved survey (62%) than at one that does not (18%) or to not know whether their institution promotes one (20%) (P = 0.02).

Most respondents either agree (182; 41%) or strongly agree (46; 10%) that patients consider online reviews when deciding which physician to visit. The remaining neither agree nor disagree (153; 35%), disagree (48; 11%), or strongly disagree (15; 3%). When the categorical answers were replaced with a numerical scale 1 to 5 with 1 being strongly disagree and 5 being strongly agree, the mean response for those practicing at institutions that check online feedback was 3.7 ± 0.9, and the mean for those practicing at institutions that do not check online feedback was 3.2 ± 1.0 (P < 0.001).

Negative Feedback in Health Care Reviews

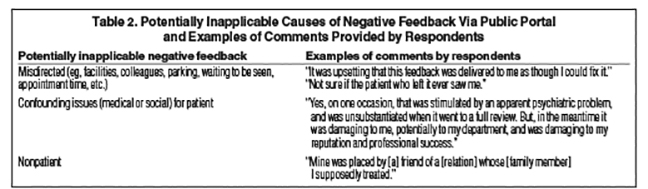

Sixty-five percent (290) of respondents did not receive negative online feedback. Of the 157 respondents who received negative feedback via public portal, 54 (34%) answered that they were concerned the feedback was confounded by the patient’s other active medical or social issues, 52 (33%) anticipated the feedback, 25 (16%) believed the feedback was incorrectly directed, and 26 (17%) believed the feedback was placed by a nonpatient. See Table 2 for additional comments.

The majority (131; 83%) of respondents who received negative feedback did not challenge an online review by a patient. The respondents who challenged an online review (26; 17%) were significantly more likely to review their own feedback (P = 0.04) and more likely to have received negative feedback (P < 0.001). A generalized linear model with a logistic link was fitted and showed that respondents who saw more than the average number of consults per week were significantly more likely to receive negative feedback (P = 0.005). Age, gender, and region of practice were not found to be significant factors.
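As a simplified illustration of the logistic model described above, the sketch below covers the special case of a single binary predictor (for example, above-average consult volume, yes or no) and a binary outcome (negative feedback, yes or no). In that case the fitted slope equals the log odds ratio of the 2x2 table, and a Wald test gives the P value. This is an assumption-laden sketch, not the authors’ model, which included several covariates. The function name and all counts are hypothetical and are not survey data.

```python
from math import erf, log, sqrt


def logistic_binary_predictor(a, b, c, d):
    """Slope, standard error, z, and two-sided Wald P value for a logistic
    model with one binary predictor, computed from a 2x2 table.

    a: predictor present, outcome present
    b: predictor present, outcome absent
    c: predictor absent,  outcome present
    d: predictor absent,  outcome absent
    All four counts must be positive.
    """
    # For a single binary predictor, the maximum likelihood slope
    # is the log odds ratio.
    beta = log((a * d) / (b * c))
    # Large-sample standard error of the log odds ratio.
    se = sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    z = beta / se
    # Two-sided P value from the standard normal distribution.
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - normal_cdf)
    return beta, se, z, p_value


# Hypothetical counts for illustration only (not taken from the survey).
beta, se, z, p = logistic_binary_predictor(40, 60, 20, 80)
print(f"log odds ratio = {beta:.3f}, SE = {se:.3f}, z = {z:.2f}, P = {p:.4f}")
```

A positive slope means the outcome is more likely when the predictor is present. With several explanatory variables, as in the survey analysis, the coefficients must be estimated iteratively by statistical software rather than read off a single table.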

Effect of Reviews on Respondents

The largest group of respondents (200; 45%) answered that they neither agree nor disagree that virtual health care reviews contribute to burnout. More respondents answered that they agree (154; 35%) or strongly agree (46; 10%) than that they disagree (39; 9%) or strongly disagree (6; 1%) that virtual health care reviews contribute to burnout. When the categorical answers were replaced with a 1 to 5 scale with 1 being strongly disagree and 5 strongly agree, the mean overall response was 3.5 ± 0.8. The mean response for those who did not anticipate negative feedback was 3.6 ± 0.8, and the mean response for those who anticipated negative feedback was 3.2 ± 0.9 (P = 0.008). Respondents (100; 22%) who received inappropriate or misdirected feedback were significantly more likely to report that virtual reviews contribute to burnout (P = 0.001). Reviewing one’s own feedback (P = 0.46), frequency of reviewing one’s own feedback (P = 0.25), and receiving negative feedback (P = 0.25) were not found to be significantly correlated with the belief that virtual health care reviews contribute to burnout.
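The conversion of Likert answers to a 1 to 5 scale can be sketched as follows, using the burnout response counts reported in this section. The function name is hypothetical, and treating the value after ± as a sample standard deviation (dividing by n - 1) is an assumption, since the text does not say what it denotes.

```python
from math import sqrt


def likert_mean_sd(counts):
    """Return (n, mean, sample SD) for a mapping of score -> number of answers."""
    n = sum(counts.values())
    mean = sum(score * k for score, k in counts.items()) / n
    # Sample variance, weighting each squared deviation by its answer count.
    variance = sum(k * (score - mean) ** 2 for score, k in counts.items()) / (n - 1)
    return n, mean, sqrt(variance)


# Burnout question counts as reported above:
# 1 = strongly disagree ... 5 = strongly agree.
burnout_counts = {1: 6, 2: 39, 3: 200, 4: 154, 5: 46}

n, mean, sd = likert_mean_sd(burnout_counts)
print(f"n = {n}, mean = {mean:.2f}, SD = {sd:.2f}")
```

Run on the reported counts, this gives a mean a little above 3.4 with a standard deviation a little above 0.8, close to the reported 3.5 ± 0.8.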

Discussion

The results reported here provide insights into the types of health care reviews used in evaluating radiation oncologists as well as how physicians view these reviews. Most respondents either agreed or strongly agreed that patients consider online reviews when deciding which physician to visit. More respondents reported that their institution checks their online feedback than reported not knowing, and more than reported that, to their knowledge, their institution did not check it. US health care organizations and radiation oncologists were more likely to check patient feedback compared to non-US organizations and radiation oncologists. In general, radiation oncologists who saw more than the average number of consults per week were more likely to receive negative feedback. More radiation oncologists agreed than disagreed that virtual assessment sites contribute to physician burnout. Additionally, respondents who received inappropriate or misdirected feedback were significantly more likely to report that virtual reviews contribute to burnout.

Surprisingly, 25% of surveyed radiation oncologists were not sure whether their institution promoted a CMS-approved health survey – a finding that may be explained by physicians being unaware which surveys are mandated or simply due to physicians not being involved in reviewing patient feedback. Since 28% of respondents reported practicing outside of the US, it is reasonable that 21% of respondents reported that their institution did not promote a CMS-approved health survey. Notably, both physicians in the US and abroad received similar rates of negative feedback (36% and 34% respectively, P = 0.83). US health care organizations were more likely to check patient feedback compared to non-US organizations (46% vs 31% respectively, P < 0.001). US radiation oncologists were also more likely to review their feedback compared to their counterparts abroad (53% vs 40%, respectively, P = 0.01), which may be due to cultural differences. The US, for instance, may practice a form of medicine that places more value on patient feedback.9-11

How physicians interpret patient evaluation feedback needs to be further investigated. As demands on physicians’ time continue to grow, shorter appointments and less face-to-face time with increasingly complex patients may contribute to negative patient reviews. In fact, a generalized linear model of the data in this survey suggests that radiation oncologists who saw more than the average number of consults per week were more likely to receive negative feedback (P = 0.005). Whether negative patient feedback contributes to physician burnout is a crucial question that needs to be answered since burnout has been associated with worse patient outcomes.12 When queried whether negative reviews contribute to burnout, the most common response among physicians in the US and abroad was neutral, neither agreeing nor disagreeing. However, responses trended toward negative reviews contributing to burnout, with more physicians either agreeing or strongly agreeing that negative reviews contribute to burnout than disagreeing or strongly disagreeing. Additionally, respondents who received inappropriate or misdirected feedback were significantly more likely to report that virtual reviews contribute to burnout (P = 0.001).

While CMS-approved surveys were constructed to limit bias and validate a true patient experience, digital patient assessment platforms are less regulated and more accessible than conventional patient assessment tools. Some physicians reported that negative reviews attributed to them were due to factors beyond their control (Table 2), such as patients being upset with support staff, wait times, and hospital facilities rather than the physician interaction. In fact, staff engagement, such as communication and responsiveness, appointment ease, and discharge information are strongly associated with perceived good clinical quality and are drivers of patient experience.3,13 In addition, physicians in our survey reported receiving negative feedback from relatives or acquaintances of patients who did not directly receive care from the physician. Misdirected negative reviews can hurt physician morale and hinder the quality improvement process.14

Improving the patient experience can likely address attributes of care that promote quality, suggesting that improvements in patient experience scores might be associated with increased clinical quality. However, misdirected or misappropriated ratings remind us that patient expectations do not always correlate with relevant clinical quality indicators.13 Subjectivity is inherent in patient-reported experience measures. Factors as diverse as demographic characteristics, social status, health, and personality can influence the patient experience.15 Although respondent randomization in CMS-approved surveys accounts for these factors, digital health care rating platforms give no indications of controls. Certain facets of care – such as a radiation oncologist’s skill and judgement in setting fields on a plan, staff teamwork, and compliance with standards of care – cannot be entirely observed by patients and, thus, cannot be accurately reflected by patient experience metrics. These aspects, however, are intrinsic to good outcomes.

Conclusion

In summary, radiation oncologists need to be aware that self-reported patient assessments are a data point in the quality of a physician and health care establishment. Digital rating platforms are less structured than CMS-approved surveys but are more easily accessible and increasingly utilized. More radiation oncologists agreed than disagreed that virtual assessment sites contribute to physician burnout, and receiving inappropriate or misdirected negative reviews may contribute. Physicians who see more than the average number of consults per week may be more likely to receive negative feedback. To best ensure appropriate feedback of a physician’s capabilities as a doctor, leadership and employee alignment for enhancing the patient experience are now more important than ever.

References

1. What matters most to the health care consumer? Insights for health care providers from the Deloitte 2016 Consumer Priorities in Health Care Survey. Deloitte. Accessed August 12, 2019. www2.deloitte.com/us/en/pages/life-sciences-and-health-care/articles/us-lshc-consumer-priorities-promo.html

2. Betts D, Korenda L. Inside the patient journey: three key touch points for consumer engagement strategies. Findings from the Deloitte 2018 Health Care Consumer Survey. Deloitte. September 25, 2018. Accessed August 12, 2019. www2.deloitte.com/insights/us/en/industry/health-care/patient-engagement-health-care-consumer-survey.html

3. The value of patient experience. Hospitals with better patient-reported experience perform better financially. Deloitte. Accessed August 12, 2019. www2.deloitte.com/content/dam/Deloitte/us/Documents/life-sciences-health-care/us-dchs-the-value-of-patient-experience.pdf

4. Burkle CM, Keegan MT. Popularity of internet physician rating sites and their apparent influence on patients’ choices of physicians. BMC Health Services Research. 2015;15:416. doi:10.1186/s12913-015-1099-2

5. Widmer RJ, Maurer MJ, Nayar VR, et al. Online physician reviews do not reflect patient satisfaction survey responses. Mayo Clin Proc. 2018;93(4):453-457. doi:10.1016/j.mayocp.2018.01.021

6. Ziemba JB, Allaf ME, Haldeman D. Consumer preferences and online comparison tools used to select a surgeon. JAMA Surg. 2017;152(4):410-411. doi:10.1001/jamasurg.2016.4993

7. Okike K, Peter-Bibb TK, Xie KC, et al. Association between physician online rating and quality of care. J Med Internet Res. 2016;18(12):e324. doi:10.2196/jmir.6612

8. Daskivich T, Luu M, Noah B, et al. Differences in online consumer ratings of health care providers across medical, surgical, and allied health specialties: observational study of 212,933 providers. J Med Internet Res. 2018;20(5):e176. doi:10.2196/jmir.9160

9. Chahrour Z, Hammoud S, Hage-Diab A, et al. The extent of medical reverse paternalism in Lebanon and its ethical implications. In: 2015 International Conference on Advances in Biomedical Engineering (ICABME). 2015:246-249. doi:10.1109/ICABME.2015.7323298

10. Osamor PE, Grady C. Women’s autonomy in health care decision-making in developing countries: a synthesis of the literature. Int J Womens Health. 2016;8:191-202. doi:10.2147/IJWH.S105483

11. Ruhnke GW, Wilson SR, Akamatsu T, et al. Ethical decision making and patient autonomy: a comparison of physicians and patients in Japan and the United States. Chest. 2000;118(4):1172-1182. doi:10.1378/chest.118.4.1172

12. Yates SW. Physician stress and burnout. Am J Med. 2020;133(2):160-164. doi:10.1016/j.amjmed.2019.08.034

13. Value of patient experience: hospitals with higher patient experience scores have higher clinical quality. Deloitte. Accessed August 12, 2019. www2.deloitte.com/content/dam/Deloitte/us/Documents/life-sciences-health-care/us-value-patient-experience-050517.pdf

14. Zgierska A, Rabago D, Miller MM. Impact of patient satisfaction ratings on physicians and clinical care. Patient Prefer Adherence. 2014;8:437-446. doi:10.2147/PPA.S59077

15. Garcia LC, Chung S, Liao L, et al. Comparison of outpatient satisfaction survey scores for Asian physicians and non-Hispanic white. JAMA Netw Open. 2019;2(2):e190027. doi:10.1001/jamanetworkopen.2019.0027

Citation

P Z, G S, J G, V R, K H. Radiation Oncologist Perceptions and Utilization of Digital Patient Assessment Platforms. Appl Radiat Oncol. 2020;(3):24-29.

September 9, 2020